
This content has been automatically translated from Ukrainian.

Elasticsearch is a distributed search and analytics engine built on top of Apache Lucene. It is sometimes described as "Google inside your infrastructure" (in the sense of being a powerful search engine) because it can search vast amounts of data (logs, text, metrics, documents, products, and so on) in near real time.

How Elasticsearch Works Under the Hood

Indexing instead of regular storage

Data is not simply stored in a table, as in SQL. It is indexed: text is broken into tokens, normalized (for example, lowercased), and written into an inverted index that maps each token to the documents containing it. This is what lets searches complete in milliseconds even across millions of documents.
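To make the idea concrete, here is a minimal sketch of an inverted index in Python. It is a toy model, not Lucene's implementation: the `tokenize`, `build_inverted_index`, and `search` functions and the sample documents are all invented for illustration, and the "analyzer" only lowercases and splits on non-alphanumeric characters.

```python
import re
from collections import defaultdict

def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens (a toy analyzer)."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_inverted_index(docs):
    """Map each token to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for token in tokenize(text):
            index[token].add(doc_id)
    return index

def search(index, query):
    """Return ids of documents containing every query token (AND semantics)."""
    tokens = tokenize(query)
    if not tokens:
        return set()
    result = set(index.get(tokens[0], set()))
    for token in tokens[1:]:
        result &= index.get(token, set())
    return result

# Hypothetical sample documents
docs = {
    1: "Disk failure on node A",
    2: "Node B restarted after failure",
    3: "Disk usage is normal",
}
index = build_inverted_index(docs)
print(sorted(search(index, "disk failure")))  # [1]
print(sorted(search(index, "FAILURE")))       # [1, 2]
```

A query never scans the documents themselves: it looks up each token and intersects the resulting id sets, which is why lookup time depends far more on the number of matching documents than on the total collection size.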

Scaling

Elasticsearch is designed as a distributed system. Its main feature is easy horizontal scaling.

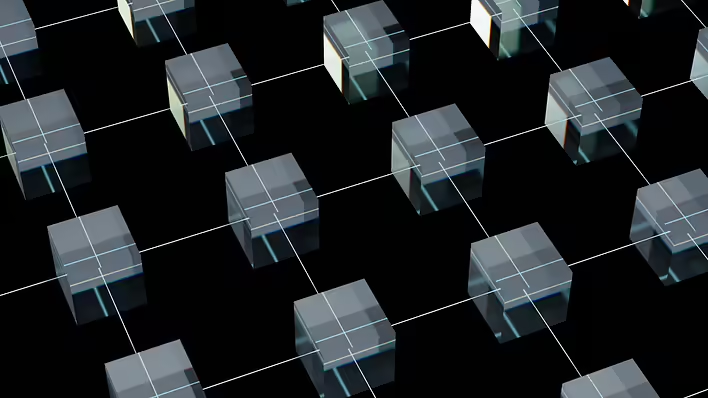

Shards - pieces of the index

Each index is divided into parts called shards, and each shard is a self-contained Lucene index. Shards of the same index can reside on different servers.
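How a document ends up on a particular shard can be sketched as hashing a routing key (by default the document id) and taking the result modulo the number of primary shards. The snippet below is a simplified illustration under stated assumptions: `pick_shard` is a hypothetical helper, `zlib.crc32` is a stand-in hash rather than the one Elasticsearch uses, and the real routing formula has additional details.

```python
import zlib
from collections import Counter

def pick_shard(routing_key: str, num_primary_shards: int) -> int:
    """Map a routing key (by default the document id) to a shard number.

    zlib.crc32 is a stand-in hash chosen for this sketch; it is not the
    hash function Elasticsearch uses.
    """
    return zlib.crc32(routing_key.encode("utf-8")) % num_primary_shards

NUM_SHARDS = 3  # hypothetical index with three primary shards
doc_ids = [f"order-{i}" for i in range(1000)]
distribution = Counter(pick_shard(doc_id, NUM_SHARDS) for doc_id in doc_ids)

# The same id always maps to the same shard, so a lookup by id
# can go straight to a single shard.
assert pick_shard("order-42", NUM_SHARDS) == pick_shard("order-42", NUM_SHARDS)
print(dict(distribution))
```

Because the shard number depends on the shard count, changing the number of primary shards would send existing ids to different shards. That is one reason the primary shard count is chosen when an index is created.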

Replicas - copies for speed and reliability

Each primary shard can have one or more copies, called replicas, which are stored on other nodes. This means:

- the system does not go down if one server fails

- reading becomes faster because copies also respond to queries

Horizontal scaling

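To increase throughput or capacity, add a new node. Elasticsearch automatically relocates shards to it and rebalances the cluster. Note that the number of primary shards is set when the index is created, so adding nodes spreads the existing shards across more machines rather than creating new ones.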
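The effect of adding a node can be illustrated with a toy allocator. This is only a sketch: the `allocate` function and node names are hypothetical, it recomputes the whole placement round-robin, and the real Elasticsearch allocator weighs many more factors and tries to move as few shards as possible. The one rule it does mirror is that a replica is never placed on the same node as its primary.

```python
from collections import defaultdict

def allocate(num_shards, num_replicas, nodes):
    """Spread shard copies over nodes round-robin.

    Copies of the same shard go to consecutive nodes, so they never share
    a node as long as there are more nodes than replicas.
    """
    if len(nodes) <= num_replicas:
        raise ValueError("need more nodes than replicas to separate copies")
    placement = defaultdict(list)
    slot = 0
    for shard in range(num_shards):
        for copy in range(num_replicas + 1):  # copy 0 is the primary
            label = f"{shard}{'p' if copy == 0 else 'r'}"
            placement[nodes[slot % len(nodes)]].append(label)
            slot += 1
    return dict(placement)

# Hypothetical cluster: 3 primary shards, 1 replica each
before = allocate(3, 1, ["node-a", "node-b"])
after = allocate(3, 1, ["node-a", "node-b", "node-c"])

print({node: len(copies) for node, copies in before.items()})  # {'node-a': 3, 'node-b': 3}
print({node: len(copies) for node, copies in after.items()})   # {'node-a': 2, 'node-b': 2, 'node-c': 2}
```

With a third node, each machine holds two shard copies instead of three, so both storage and query load per node drop without any change to the index itself.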

Distributed search

When a request comes in, the coordinating node forwards it to one copy (primary or replica) of every relevant shard, collects the partial results, merges them, and returns the response. The shards search in parallel, which is a large part of why ES is so fast.
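This scatter-gather pattern can be sketched in a few lines of Python. The shard contents, scores, and the `query_shard` and `coordinate` functions below are invented for illustration; a thread pool stands in for network calls to separate nodes.

```python
import heapq
from concurrent.futures import ThreadPoolExecutor

# Hypothetical shard contents: (score, doc_id) pairs already scored for one query.
SHARDS = [
    [(0.9, "a1"), (0.4, "a2"), (0.1, "a3")],
    [(0.8, "b1"), (0.7, "b2")],
    [(0.95, "c1"), (0.2, "c2")],
]

def query_shard(hits, size):
    """Each shard returns only its own top `size` hits."""
    return heapq.nlargest(size, hits)

def coordinate(shards, size):
    """Fan the query out to every shard in parallel, then merge the partial results."""
    with ThreadPoolExecutor(max_workers=len(shards)) as pool:
        partials = list(pool.map(lambda hits: query_shard(hits, size), shards))
    merged = heapq.nlargest(size, (hit for part in partials for hit in part))
    return [doc_id for _score, doc_id in merged]

print(coordinate(SHARDS, size=3))  # ['c1', 'a1', 'b1']
```

Each shard does only a fraction of the work and returns a small result list, so the coordinating node merges a handful of candidates instead of scanning the full dataset.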

Elasticsearch in Logging: What is the ELK Stack

One of the most popular applications of ES is logging. The ELK stack is built for this:

E - Elasticsearch

Stores and indexes all logs.

L - Logstash

Processes logs: reads, filters, parses, structures, and sends them to Elasticsearch.
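The kind of transformation Logstash performs can be illustrated in plain Python. This is not Logstash configuration: the log format, field names, and `parse_line` function are assumptions made for this sketch, which only shows how a raw line becomes a structured document ready to be indexed.

```python
import json
import re

# Hypothetical log format: "<ISO timestamp> <LEVEL> <service>: <message>"
LINE_PATTERN = re.compile(
    r"^(?P<timestamp>\S+)\s+(?P<level>[A-Z]+)\s+(?P<service>[\w-]+):\s+(?P<message>.*)$"
)

def parse_line(line):
    """Turn one raw log line into a structured document, or None if it does not match."""
    match = LINE_PATTERN.match(line.strip())
    if match is None:
        return None
    doc = match.groupdict()
    doc["level"] = doc["level"].lower()  # normalize so filtering by level is consistent
    return doc

raw = "2024-05-01T12:00:03Z ERROR payment-api: timeout calling bank gateway"
print(json.dumps(parse_line(raw), indent=2))
```

Once the line is split into fields such as `level` and `service`, it can be filtered and aggregated by those fields instead of being searched as one opaque string.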

K - Kibana

Visualization: log search, graphs, filters, dashboards. This makes log analysis convenient and visual.

ELK is used for:

- finding errors

- system behavior analysis

- understanding load peaks

- cybersecurity

- auditing

Analytics and Monitoring: Kibana + Beats

In addition to logs, Elasticsearch is increasingly used for metrics and monitoring.

Beats - lightweight agents that send data:

- Filebeat - log files

- Metricbeat - CPU, RAM, network

- Packetbeat - traffic

- Heartbeat - service availability

- Auditbeat - security events

They send data to Elasticsearch, and Kibana allows building dashboards, viewing system load, analyzing API requests, and finding slow services.

Elasticsearch is not just search. It is a distributed indexer that:

- searches text lightning-fast

- scales easily

- provides fault tolerance

- supports analytics and aggregations

- underlies popular stacks for logs and monitoring (ELK, Beats)

In the world of big data, Elasticsearch is one of the most convenient tools for quickly finding, filtering, and analyzing any information.

Can Elasticsearch be Used as a Database

Elasticsearch itself is a database. It does not use MySQL, PostgreSQL, or any other external DBMS. All data is stored as JSON documents, and physical storage and indexing are provided by Lucene.

It can be used as a primary database, but only where search speed and analytics are central: a product catalog, a logging system, storage for analytical events, or time-series data. However, Elasticsearch is not a full replacement for a traditional relational database. It lacks classic ACID transactions, relational joins between entities, strict consistency control, and immediate visibility of new records in search: a newly indexed document becomes searchable only after the next index refresh, which by default happens about once per second. Updating a document marks the old version as deleted and indexes a new one. Deleted documents are not removed from disk right away; they are purged later, when Lucene merges segments.
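The update and visibility behavior described above can be modeled with a small toy class. `ToyShard` and all of its methods are hypothetical names for this sketch; it imitates the general idea of append-only segments, delete markers, refreshes, and merges, not Lucene's actual data structures.

```python
class ToyShard:
    """Append-only segments plus delete markers; a sketch, not Lucene internals."""

    def __init__(self):
        self.buffer = []              # indexed but not yet searchable
        self.segments = []            # immutable tuples of (doc_id, version, source)
        self.pending_deletes = set()  # delete markers waiting for the next refresh
        self.deleted = set()          # (doc_id, version) pairs hidden from search
        self.versions = {}            # doc_id -> latest version number

    def index(self, doc_id, source):
        """Index or update: mark the old version for deletion, append a new one."""
        old = self.versions.get(doc_id)
        if old is not None:
            self.pending_deletes.add((doc_id, old))
        self.versions[doc_id] = (old or 0) + 1
        self.buffer.append((doc_id, self.versions[doc_id], source))

    def refresh(self):
        """Make buffered writes and deletes visible to search."""
        self.deleted |= self.pending_deletes
        self.pending_deletes = set()
        if self.buffer:
            self.segments.append(tuple(self.buffer))
            self.buffer = []

    def search(self):
        """Return live documents from searchable segments only."""
        return {d: s for seg in self.segments for d, v, s in seg
                if (d, v) not in self.deleted}

    def stored_count(self):
        """Number of document versions physically held in segments."""
        return sum(len(seg) for seg in self.segments)

    def merge(self):
        """Rewrite segments into one, physically dropping deleted versions."""
        live = tuple(e for seg in self.segments for e in seg
                     if (e[0], e[1]) not in self.deleted)
        self.segments = [live] if live else []
        self.deleted = set()

shard = ToyShard()
shard.index("1", "v1")
print(shard.search())        # {} : not searchable before a refresh
shard.refresh()
shard.index("1", "v2")
shard.refresh()
print(shard.search())        # {'1': 'v2'}
print(shard.stored_count())  # 2 : the old version still occupies space
shard.merge()
print(shard.stored_count())  # 1
```

The sketch shows why Elasticsearch is called near real-time rather than real-time, and why heavy update workloads temporarily consume extra disk space until segments are merged.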

Therefore, it is advisable to use Elasticsearch as a fast search and analytics layer, a separate time-series database, or the main log storage. For financial operations, transactions, complex relationships, and data that must be strictly consistent, traditional DBMSs such as PostgreSQL or MySQL are better suited.
